
Probability Calibration curves

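When performing classification, one often wants to predict not only the class label but also the associated probability. This probability gives some measure of confidence in the prediction. This example demonstrates how to visualize how well calibrated the predicted probabilities are, using calibration curves (also known as reliability diagrams). It also demonstrates how to calibrate an uncalibrated classifier.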

# Authors: The scikit-learn developers

# SPDX-License-Identifier: BSD-3-Clause

Dataset

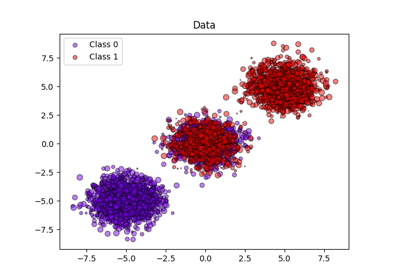

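We will use a synthetic binary classification dataset with 100,000 samples and 20 features. Of the 20 features, only 2 are informative, 10 are redundant (random combinations of the informative features) and the remaining 8 are uninformative (random numbers). Of the 100,000 samples, 1,000 will be used for model fitting and the rest for testing.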

from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split

X, y = make_classification(
    n_samples=100_000, n_features=20, n_informative=2, n_redundant=10, random_state=42
)

# test_size=0.99 keeps 1,000 samples for fitting and 99,000 for testing
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.99, random_state=42
)

Calibration curves

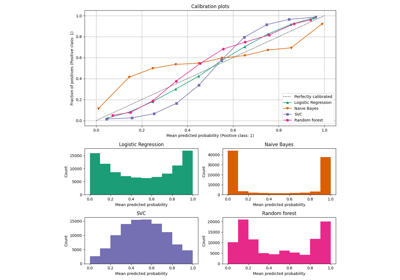

Gaussian Naive Bayes

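First, we will compare:

- LogisticRegression (used as a baseline, since properly regularized logistic regression is very often well calibrated by default thanks to its use of the log loss)
- Uncalibrated GaussianNB
- GaussianNB with isotonic and sigmoid calibration (see the User Guide)

Calibration curves for all 4 conditions are plotted below, with the mean predicted probability of each bin on the x-axis and the fraction of positive samples in each bin on the y-axis.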
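To make the two axes concrete, here is a minimal sketch that computes one calibration curve by hand and compares it with `sklearn.calibration.calibration_curve`. The data are synthetic and well calibrated by construction; the names `mean_pred` and `frac_pos` are illustrative and do not appear in the example itself.

```python
import numpy as np
from sklearn.calibration import calibration_curve

rng = np.random.default_rng(0)

# Hypothetical predictions: labels are drawn so that P(y = 1) equals the
# predicted probability, i.e. the "classifier" is calibrated by construction.
y_prob = rng.uniform(0, 1, size=5_000)
y_true = (rng.uniform(0, 1, size=5_000) < y_prob).astype(int)

# Assign every prediction to one of 10 equal-width bins on [0, 1].
n_bins = 10
edges = np.linspace(0.0, 1.0, n_bins + 1)
bin_ids = np.clip(np.digitize(y_prob, edges) - 1, 0, n_bins - 1)

# x-axis: mean predicted probability per bin.
mean_pred = np.array([y_prob[bin_ids == b].mean() for b in range(n_bins)])
# y-axis: fraction of positive samples per bin.
frac_pos = np.array([y_true[bin_ids == b].mean() for b in range(n_bins)])

# Reference computation from scikit-learn (uniform binning is the default).
prob_true, prob_pred = calibration_curve(y_true, y_prob, n_bins=n_bins)

print(np.column_stack([mean_pred, frac_pos]).round(3))
```

For a well calibrated model the two columns stay close to each other, which is the diagonal of the reliability diagram.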

import matplotlib.pyplot as plt
from matplotlib.gridspec import GridSpec

from sklearn.calibration import CalibratedClassifierCV, CalibrationDisplay
from sklearn.linear_model import LogisticRegression
from sklearn.naive_bayes import GaussianNB

lr = LogisticRegression(C=1.0)
gnb = GaussianNB()
gnb_isotonic = CalibratedClassifierCV(gnb, cv=2, method="isotonic")
gnb_sigmoid = CalibratedClassifierCV(gnb, cv=2, method="sigmoid")

clf_list = [
    (lr, "Logistic"),
    (gnb, "Naive Bayes"),
    (gnb_isotonic, "Naive Bayes + Isotonic"),
    (gnb_sigmoid, "Naive Bayes + Sigmoid"),
]

fig = plt.figure(figsize=(10, 10))
gs = GridSpec(4, 2)
colors = plt.get_cmap("Dark2")

# Top half of the figure: all calibration curves on shared axes
ax_calibration_curve = fig.add_subplot(gs[:2, :2])
calibration_displays = {}
for i, (clf, name) in enumerate(clf_list):
    clf.fit(X_train, y_train)
    display = CalibrationDisplay.from_estimator(
        clf,
        X_test,
        y_test,
        n_bins=10,
        name=name,
        ax=ax_calibration_curve,
        color=colors(i),
    )
    calibration_displays[name] = display

ax_calibration_curve.grid()
ax_calibration_curve.set_title("Calibration plots (Naive Bayes)")

# Add histogram
grid_positions = [(2, 0), (2, 1), (3, 0), (3, 1)]
for i, (_, name) in enumerate(clf_list):
    row, col = grid_positions[i]
    ax = fig.add_subplot(gs[row, col])

    ax.hist(
        calibration_displays[name].y_prob,
        range=(0, 1),
        bins=10,
        label=name,
        color=colors(i),
    )
    ax.set(title=name, xlabel="Mean predicted probability", ylabel="Count")

plt.tight_layout()
plt.show()

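Uncalibrated GaussianNB is poorly calibrated because the redundant features violate the assumption of feature independence and produce an overly confident classifier, as indicated by the typical transposed-sigmoid curve. Calibrating the probabilities of GaussianNB with isotonic regression fixes this issue, as can be seen from the nearly diagonal calibration curve. Sigmoid regression also improves calibration slightly, albeit not as strongly as the non-parametric isotonic regression. This can be attributed to the fact that we have plenty of calibration data, so the greater flexibility of the non-parametric model can be exploited.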
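The effect described above can be reproduced in isolation. The sketch below is a simplified illustration, not what `CalibratedClassifierCV` does internally: it simulates over-confident scores by tripling the log-odds of a hypothetical true probability, then fits `IsotonicRegression` and, as a stand-in for sigmoid calibration, a one-dimensional `LogisticRegression` on the raw scores. All variable names and the simulation itself are assumptions made for the illustration.

```python
import numpy as np
from sklearn.isotonic import IsotonicRegression
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import brier_score_loss

rng = np.random.default_rng(0)
n = 20_000

# Hypothetical ground truth: the real probability of the positive class.
true_p = rng.uniform(0.01, 0.99, size=n)
y = (rng.uniform(size=n) < true_p).astype(int)

# Simulate over-confidence: tripling the log-odds pushes scores towards
# 0 and 1, which yields a transposed-sigmoid reliability curve.
log_odds = np.log(true_p / (1 - true_p))
raw = 1 / (1 + np.exp(-3 * log_odds))

# Fit the calibrators on one half and evaluate on the other half.
half = n // 2
raw_cal, y_cal = raw[:half], y[:half]
raw_eval, y_eval = raw[half:], y[half:]

# Non-parametric calibrator: any non-decreasing map from score to probability.
iso = IsotonicRegression(y_min=0.0, y_max=1.0, out_of_bounds="clip")
iso.fit(raw_cal, y_cal)
p_iso = iso.predict(raw_eval)

# Parametric stand-in (assumption): a logistic curve fitted on the raw score.
sig = LogisticRegression(C=1e6)
sig.fit(raw_cal.reshape(-1, 1), y_cal)
p_sig = sig.predict_proba(raw_eval.reshape(-1, 1))[:, 1]

brier_raw = brier_score_loss(y_eval, raw_eval)
brier_iso = brier_score_loss(y_eval, p_iso)
brier_sig = brier_score_loss(y_eval, p_sig)
print(f"raw={brier_raw:.4f}  isotonic={brier_iso:.4f}  sigmoid={brier_sig:.4f}")
```

With 10,000 calibration samples the isotonic fit has enough data to recover the shape of the distortion, which mirrors the argument made for GaussianNB.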

Below we carry out a quantitative analysis using several classification metrics: Brier score loss, log loss, precision, recall, F1 score and ROC AUC.

from collections import defaultdict

import pandas as pd

from sklearn.metrics import (
    brier_score_loss,
    f1_score,
    log_loss,
    precision_score,
    recall_score,
    roc_auc_score,
)

scores = defaultdict(list)
for i, (clf, name) in enumerate(clf_list):
    clf.fit(X_train, y_train)
    y_prob = clf.predict_proba(X_test)
    y_pred = clf.predict(X_test)
    scores["Classifier"].append(name)

    # Metrics computed from the predicted probability of the positive class
    for metric in [brier_score_loss, log_loss, roc_auc_score]:
        score_name = metric.__name__.replace("_", " ").replace("score", "").capitalize()
        scores[score_name].append(metric(y_test, y_prob[:, 1]))

    # Metrics computed from the predicted class labels
    for metric in [precision_score, recall_score, f1_score]:
        score_name = metric.__name__.replace("_", " ").replace("score", "").capitalize()
        scores[score_name].append(metric(y_test, y_pred))

# round() returns a new DataFrame, so keep the result
score_df = pd.DataFrame(scores).set_index("Classifier").round(decimals=3)

score_df

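Notice that although calibration improves the Brier score loss (a metric composed of a calibration term and a refinement term) and the log loss, it does not significantly alter the prediction accuracy measures (precision, recall and F1 score). This is because calibration should not significantly change the predicted probabilities at the location of the decision threshold (x = 0.5 on the graph). Calibration should, however, make the predicted probabilities more accurate and thus more useful for making allocation decisions under uncertainty. Further, ROC AUC should not change at all, because calibration is a monotonic transformation. Indeed, no rank metric is affected by calibration.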
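The following sketch illustrates this argument on synthetic data. It assumes a hypothetical strictly increasing recalibration map, `scale_log_odds`, which is not part of the example or of scikit-learn; it leaves 0.5 fixed, so neither the ranking nor the thresholded labels change, while the Brier score does.

```python
import numpy as np
from sklearn.metrics import brier_score_loss, roc_auc_score

rng = np.random.default_rng(0)
n = 10_000

# Hypothetical true probabilities and labels drawn from them.
true_p = rng.uniform(0.01, 0.99, size=n)
y = (rng.uniform(size=n) < true_p).astype(int)


def scale_log_odds(p, factor):
    """Strictly increasing map that multiplies the log-odds by `factor`."""
    log_odds = np.log(p / (1 - p))
    return 1 / (1 + np.exp(-factor * log_odds))


# Over-confident scores, then a monotonic map that undoes the distortion.
overconfident = scale_log_odds(true_p, 3.0)
recalibrated = scale_log_odds(overconfident, 1.0 / 3.0)

# Rank metric: identical, because the ordering of the samples is unchanged.
auc_before = roc_auc_score(y, overconfident)
auc_after = roc_auc_score(y, recalibrated)

# Thresholded labels: identical, because the map leaves 0.5 in place.
pred_before = overconfident >= 0.5
pred_after = recalibrated >= 0.5

# Probability metric: improves once the probabilities are corrected.
brier_before = brier_score_loss(y, overconfident)
brier_after = brier_score_loss(y, recalibrated)

print(f"AUC:   {auc_before:.4f} -> {auc_after:.4f}")
print(f"Brier: {brier_before:.4f} -> {brier_after:.4f}")
print("labels unchanged:", np.array_equal(pred_before, pred_after))
```

A real calibrator is fitted from data and need not keep 0.5 exactly fixed, which is why the text says the label-based metrics change little rather than not at all.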

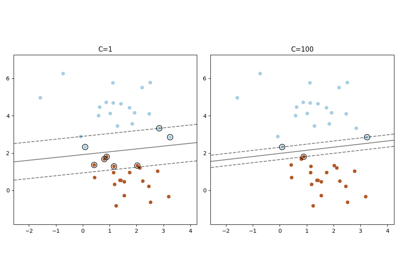

Linear support vector classifier

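Next, we will compare:

- LogisticRegression (baseline)
- Uncalibrated LinearSVC. Since SVC does not output probabilities by default, we naively scale the output of decision_function into [0, 1] by applying min-max scaling.
- LinearSVC with isotonic and sigmoid calibration (see the User Guide)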

import numpy as np

from sklearn.svm import LinearSVC


class NaivelyCalibratedLinearSVC(LinearSVC):
    """LinearSVC with `predict_proba` method that naively scales
    `decision_function` output for binary classification."""

    def fit(self, X, y):
        super().fit(X, y)
        # Remember the range of the decision function on the training data
        df = self.decision_function(X)
        self.df_min_ = df.min()
        self.df_max_ = df.max()
        # Return the estimator, following the scikit-learn `fit` convention
        return self

    def predict_proba(self, X):
        """Min-max scale output of `decision_function` to [0, 1]."""
        df = self.decision_function(X)
        calibrated_df = (df - self.df_min_) / (self.df_max_ - self.df_min_)
        # Test-time values can fall outside the training range, so clip them
        proba_pos_class = np.clip(calibrated_df, 0, 1)
        proba_neg_class = 1 - proba_pos_class
        proba = np.c_[proba_neg_class, proba_pos_class]
        return proba

lr = LogisticRegression(C=1.0)
svc = NaivelyCalibratedLinearSVC(max_iter=10_000)
svc_isotonic = CalibratedClassifierCV(svc, cv=2, method="isotonic")
svc_sigmoid = CalibratedClassifierCV(svc, cv=2, method="sigmoid")

clf_list = [
    (lr, "Logistic"),
    (svc, "SVC"),
    (svc_isotonic, "SVC + Isotonic"),
    (svc_sigmoid, "SVC + Sigmoid"),
]

fig = plt.figure(figsize=(10, 10))
gs = GridSpec(4, 2)

# Top half of the figure: all calibration curves on shared axes
ax_calibration_curve = fig.add_subplot(gs[:2, :2])
calibration_displays = {}
for i, (clf, name) in enumerate(clf_list):
    clf.fit(X_train, y_train)
    display = CalibrationDisplay.from_estimator(
        clf,
        X_test,
        y_test,
        n_bins=10,
        name=name,
        ax=ax_calibration_curve,
        color=colors(i),
    )
    calibration_displays[name] = display

ax_calibration_curve.grid()
ax_calibration_curve.set_title("Calibration plots (SVC)")

# Add histogram
grid_positions = [(2, 0), (2, 1), (3, 0), (3, 1)]
for i, (_, name) in enumerate(clf_list):
    row, col = grid_positions[i]
    ax = fig.add_subplot(gs[row, col])

    ax.hist(
        calibration_displays[name].y_prob,
        range=(0, 1),
        bins=10,
        label=name,
        color=colors(i),
    )
    ax.set(title=name, xlabel="Mean predicted probability", ylabel="Count")

plt.tight_layout()
plt.show()

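LinearSVC shows the opposite behavior to GaussianNB: its calibration curve has a sigmoid shape, which is typical of an under-confident classifier. In the case of LinearSVC, this is caused by the margin property of the hinge loss, which focuses on samples that are close to the decision boundary (the support vectors). Samples that are far away from the decision boundary do not affect the hinge loss. It therefore makes sense that LinearSVC does not try to separate samples in the high-confidence regions. This leads to flatter calibration curves near 0 and 1, and is shown empirically on a variety of datasets in Niculescu-Mizil & Caruana [1].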
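The margin property can be checked with a few numbers. This is a minimal sketch using the textbook hinge loss max(0, 1 - y·f(x)) with labels in {-1, +1}; the helper name `hinge_loss_per_sample` and the values are made up for the illustration.

```python
import numpy as np


def hinge_loss_per_sample(y_signed, decision):
    """Hinge loss max(0, 1 - y * f(x)) for labels in {-1, +1}."""
    return np.maximum(0.0, 1.0 - y_signed * decision)


# Hypothetical labels and decision_function values for five samples.
y_signed = np.array([1, 1, 1, -1, -1])
decision = np.array([0.2, 1.0, 5.0, -3.0, 0.5])

losses = hinge_loss_per_sample(y_signed, decision)
print(losses)

# Pushing an already well-classified sample further from the boundary
# changes nothing: its loss is zero both before and after.
far_before = hinge_loss_per_sample(np.array([1]), np.array([5.0]))
far_after = hinge_loss_per_sample(np.array([1]), np.array([50.0]))
print(far_before, far_after)
```

Only the first sample (inside the margin) and the last one (misclassified) contribute to the loss, so the optimizer has no incentive to spread out the scores of confidently classified samples.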

Both kinds of calibration (sigmoid and isotonic) can fix this issue and yield similar results.

As before, we show the Brier score loss, log loss, precision, recall, F1 score and ROC AUC.

scores = defaultdict(list)
for i, (clf, name) in enumerate(clf_list):
    clf.fit(X_train, y_train)
    y_prob = clf.predict_proba(X_test)
    y_pred = clf.predict(X_test)
    scores["Classifier"].append(name)

    # Metrics computed from the predicted probability of the positive class
    for metric in [brier_score_loss, log_loss, roc_auc_score]:
        score_name = metric.__name__.replace("_", " ").replace("score", "").capitalize()
        scores[score_name].append(metric(y_test, y_prob[:, 1]))

    # Metrics computed from the predicted class labels
    for metric in [precision_score, recall_score, f1_score]:
        score_name = metric.__name__.replace("_", " ").replace("score", "").capitalize()
        scores[score_name].append(metric(y_test, y_pred))

# round() returns a new DataFrame, so keep the result
score_df = pd.DataFrame(scores).set_index("Classifier").round(decimals=3)

score_df

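As with GaussianNB above, calibration improves both the Brier score loss and the log loss but does not alter the prediction accuracy measures (precision, recall and F1 score) much.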

Summary

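Parametric sigmoid calibration can deal with situations where the calibration curve of the base classifier is sigmoid-shaped (e.g., for LinearSVC) but not where it is transposed-sigmoid (e.g., GaussianNB). Non-parametric isotonic calibration can deal with both situations but may require more data to produce good results.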
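In symbols, and following the usual formulation of these two calibrators (the sigmoid method is commonly known as Platt scaling), let $f_i$ be the uncalibrated output for sample $i$ and $y_i \in \{0, 1\}$ its label. The notation below is introduced for this note and is not taken from the example code.

$$
\text{sigmoid:}\qquad \hat{p}(y_i = 1 \mid f_i) = \frac{1}{1 + \exp(A f_i + B)}, \qquad A, B \in \mathbb{R} \text{ fitted by maximum likelihood}
$$

$$
\text{isotonic:}\qquad \min_{\hat{f}_1, \dots, \hat{f}_n} \sum_{i=1}^{n} \bigl(y_i - \hat{f}_i\bigr)^2 \quad \text{subject to} \quad \hat{f}_i \ge \hat{f}_j \ \text{whenever}\ f_i \ge f_j
$$

The sigmoid map has a single S shape governed by two parameters, so it can undo a sigmoid-shaped reliability curve but cannot bend in the opposite, transposed-sigmoid direction. The isotonic fit is only constrained to be non-decreasing, which lets it follow either shape at the cost of needing more data to estimate.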

References
