备注

Go to the end 下载完整的示例代码。或者通过浏览器中的MysterLite或Binder运行此示例

使用完全随机树哈希特征转换#

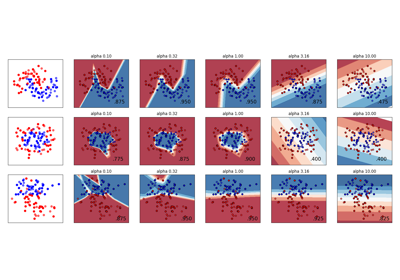

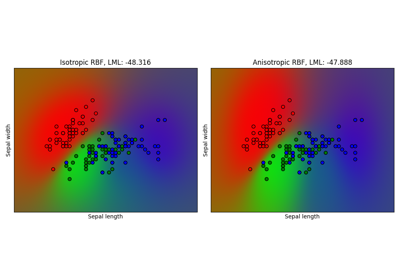

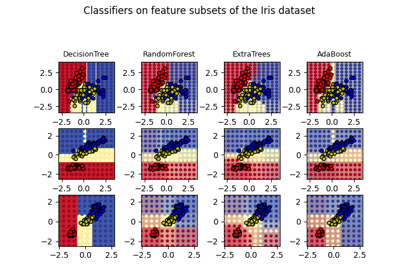

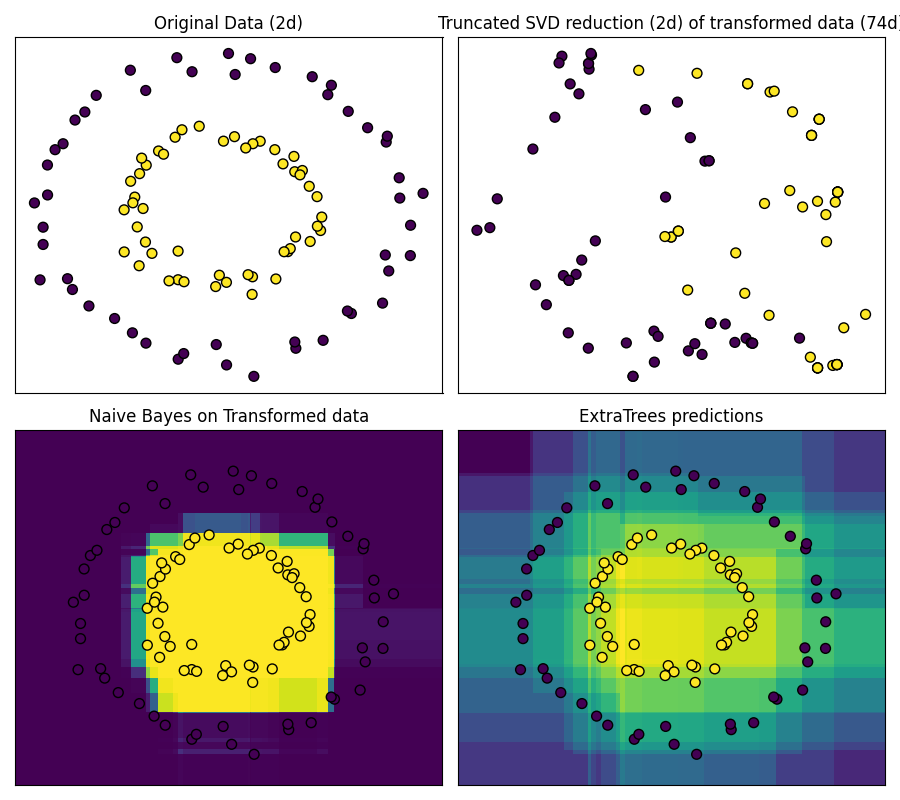

RandomTreesEmbedding提供了一种将数据映射到非常高维度、稀疏表示的方法,这可能有利于分类。映射是完全无监督的,并且非常高效。

此示例可视化了由几棵树给出的分区,并展示了如何将转换用于非线性降维或非线性分类。

相邻的点通常共享同一棵树的同一片叶子,因此共享其哈希表示的大部分。这允许简单地根据具有截断的奇异值的转换数据的主成分来分离两个同心圆。

在多维空间中,线性分类器通常能够实现出色的准确性。对于稀疏二进制数据,BernoulliNB特别适合。最下面一行将BernoulliNB在转换后的空间中获得的决策边界与在原始数据上学习的ExtraTreesClassifier森林进行比较。

# Authors: The scikit-learn developers

# SPDX-License-Identifier: BSD-3-Clause

import matplotlib.pyplot as plt

import numpy as np

from sklearn.datasets import make_circles

from sklearn.decomposition import TruncatedSVD

from sklearn.ensemble import ExtraTreesClassifier, RandomTreesEmbedding

from sklearn.naive_bayes import BernoulliNB

# make a synthetic dataset

X, y = make_circles(factor=0.5, random_state=0, noise=0.05)

# use RandomTreesEmbedding to transform data

hasher = RandomTreesEmbedding(n_estimators=10, random_state=0, max_depth=3)

X_transformed = hasher.fit_transform(X)

# Visualize result after dimensionality reduction using truncated SVD

svd = TruncatedSVD(n_components=2)

X_reduced = svd.fit_transform(X_transformed)

# Learn a Naive Bayes classifier on the transformed data

nb = BernoulliNB()

nb.fit(X_transformed, y)

# Learn an ExtraTreesClassifier for comparison

trees = ExtraTreesClassifier(max_depth=3, n_estimators=10, random_state=0)

trees.fit(X, y)

# scatter plot of original and reduced data

fig = plt.figure(figsize=(9, 8))

ax = plt.subplot(221)

ax.scatter(X[:, 0], X[:, 1], c=y, s=50, edgecolor="k")

ax.set_title("Original Data (2d)")

ax.set_xticks(())

ax.set_yticks(())

ax = plt.subplot(222)

ax.scatter(X_reduced[:, 0], X_reduced[:, 1], c=y, s=50, edgecolor="k")

ax.set_title(

"Truncated SVD reduction (2d) of transformed data (%dd)" % X_transformed.shape[1]

)

ax.set_xticks(())

ax.set_yticks(())

# Plot the decision in original space. For that, we will assign a color

# to each point in the mesh [x_min, x_max]x[y_min, y_max].

h = 0.01

x_min, x_max = X[:, 0].min() - 0.5, X[:, 0].max() + 0.5

y_min, y_max = X[:, 1].min() - 0.5, X[:, 1].max() + 0.5

xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))

# transform grid using RandomTreesEmbedding

transformed_grid = hasher.transform(np.c_[xx.ravel(), yy.ravel()])

y_grid_pred = nb.predict_proba(transformed_grid)[:, 1]

ax = plt.subplot(223)

ax.set_title("Naive Bayes on Transformed data")

ax.pcolormesh(xx, yy, y_grid_pred.reshape(xx.shape))

ax.scatter(X[:, 0], X[:, 1], c=y, s=50, edgecolor="k")

ax.set_ylim(-1.4, 1.4)

ax.set_xlim(-1.4, 1.4)

ax.set_xticks(())

ax.set_yticks(())

# transform grid using ExtraTreesClassifier

y_grid_pred = trees.predict_proba(np.c_[xx.ravel(), yy.ravel()])[:, 1]

ax = plt.subplot(224)

ax.set_title("ExtraTrees predictions")

ax.pcolormesh(xx, yy, y_grid_pred.reshape(xx.shape))

ax.scatter(X[:, 0], X[:, 1], c=y, s=50, edgecolor="k")

ax.set_ylim(-1.4, 1.4)

ax.set_xlim(-1.4, 1.4)

ax.set_xticks(())

ax.set_yticks(())

plt.tight_layout()

plt.show()

Total running time of the script: (0分0.252秒)

相关实例

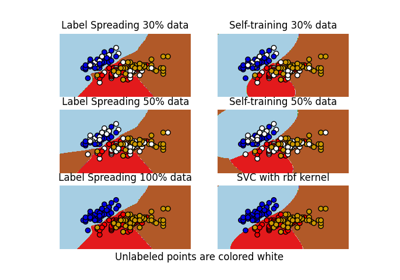

Decision boundary of semi-supervised classifiers versus SVM on the Iris dataset

Gallery generated by Sphinx-Gallery <https://sphinx-gallery.github.io> _