10.1. Understanding k-Nearest Neighbour

10.1.1. Goal

In this chapter, we will understand the concepts of the k-Nearest Neighbour (kNN) algorithm.

10.1.2. Theory

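kNN is one of the simplest classification algorithms available for supervised learning. The idea is to search for the closest match of the test data in feature space. We will look into it with the image below.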

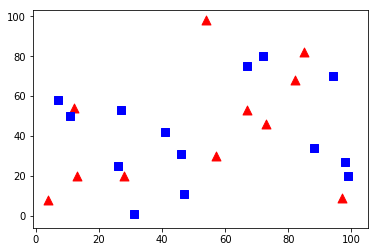

In the image, there are two families, Blue Squares and Red Triangles. We call each family a Class. Their houses are shown on their town map, which we call the feature space. (You can think of a feature space as a space where all the data are projected. For example, consider a 2D coordinate space. Each data point has two features, its x and y coordinates. You can represent this data in your 2D coordinate space, right? Now imagine there are three features: you need a 3D space. With N features, you need an N-dimensional space. This N-dimensional space is the feature space. Our image can be considered a 2D case with two features.)
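As a small illustration of the feature-space idea, the sketch below stores each sample as one row of a NumPy array, with one column per feature. The sample values and the helper `euclidean_distance` are made up for this example; the point is only that the same distance computation works for 2 features or for N.

```python
import numpy as np

def euclidean_distance(a, b):
    """Straight-line distance between two points in any number of dimensions."""
    return float(np.sqrt(np.sum((a - b) ** 2)))

# Hypothetical samples with two features each (x, y): a 2D feature space.
samples_2d = np.array([[1.0, 2.0], [4.0, 6.0]])
n_samples, n_features = samples_2d.shape  # 2 samples, 2 features

# The same function works unchanged in a 5-dimensional feature space.
origin_5d = np.zeros(5)
ones_5d = np.ones(5)

d2 = euclidean_distance(samples_2d[0], samples_2d[1])  # sides 3 and 4, so 5.0
d5 = euclidean_distance(origin_5d, ones_5d)            # square root of 5
```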

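Now a new member comes into the town and creates a new home, which is shown as a green circle. He should be added to one of these Blue/Red families. We call that process Classification. What do we do? Since we are dealing with kNN, let us apply this algorithm.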

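One method is to check who his nearest neighbour is. From the image, it is clear that it is the Red Triangle family. So he is also added to Red Triangle. This method is simply called Nearest Neighbour, because classification depends only on the nearest neighbour.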

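But there is a problem with that. Red Triangle may be the nearest, but what if there are a lot of Blue Squares near him? Then Blue Squares have more strength in that locality than Red Triangle. So checking only the nearest one is not sufficient. Instead we check some \(k\) nearest families. Whoever is in the majority among them, the new-comer belongs to that family. In our image, let's take \(k=3\), i.e. the 3 nearest families. He has two Red and one Blue (there are two Blues equidistant, but since k=3, we take only one of them), so again he should be added to the Red family. But what if we take \(k=7\)? Then he has 5 Blue families and 2 Red families. Now he should be added to the Blue family. So it all changes with the value of k. More interestingly, what if \(k = 4\)? He has 2 Red and 2 Blue neighbours. It is a tie! So, with two classes, it is better to take k as an odd number. This method is called k-Nearest Neighbour, since classification depends on the k nearest neighbours.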
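The majority vote described above can be sketched in a few lines of NumPy. This is an illustrative sketch, not OpenCV's implementation: the function name `knn_majority_vote`, the house coordinates, and the tie-breaking rule (the smallest label wins) are all assumptions made for this example.

```python
import numpy as np

def knn_majority_vote(train_points, train_labels, query, k):
    """Return the majority label among the k training points nearest to query."""
    # Squared Euclidean distance from the query to every training point.
    sq_dists = np.sum((train_points - query) ** 2, axis=1)
    # Indices of the k smallest distances (stable sort keeps ties in input order).
    nearest = np.argsort(sq_dists, kind="stable")[:k]
    # Count votes per label; on a tie, np.argmax picks the smallest label.
    votes = np.bincount(train_labels[nearest])
    return int(np.argmax(votes))

# Hypothetical town: label 0 = Red, label 1 = Blue.
houses = np.array([[1.0, 0.0], [0.0, 1.5], [2.0, 0.0], [0.0, 2.5], [3.0, 0.0]])
families = np.array([0, 0, 1, 1, 1])
newcomer = np.array([0.0, 0.0])

label_k1 = knn_majority_vote(houses, families, newcomer, k=1)  # nearest house is Red
label_k3 = knn_majority_vote(houses, families, newcomer, k=3)  # 2 Red vs 1 Blue
label_k5 = knn_majority_vote(houses, families, newcomer, k=5)  # 2 Red vs 3 Blue
```

With this toy data the answer flips from Red to Blue as k grows from 3 to 5, which is the same effect the text describes for its own figure.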

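Again, in kNN, it is true that we are considering k neighbours, but we are giving equal importance to all of them, right? Is that fair? For example, take the case of \(k=4\). We said it is a tie. But notice that the 2 Red families are closer to him than the 2 Blue families. So he is more eligible to be added to Red. How do we express that mathematically? We give each family a weight depending on its distance to the new-comer. Those who are near him get higher weights, while those who are far away get lower weights. Then we add up the total weights of each family separately. Whichever family gets the highest total weight, the new-comer goes to that family. This is called modified kNN.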
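A minimal sketch of the distance-weighted vote follows. The text only says that nearer families get higher weights; the specific choice of weight = 1 / distance, the small `eps` guard against division by zero, and the name `knn_weighted_vote` are assumptions made for this illustration.

```python
import numpy as np

def knn_weighted_vote(train_points, train_labels, query, k, eps=1e-9):
    """Return (winning label, total weight per label) for the k nearest points."""
    dists = np.sqrt(np.sum((train_points - query) ** 2, axis=1))
    nearest = np.argsort(dists, kind="stable")[:k]
    # Assumed weighting scheme: inverse distance, so closer neighbours count more.
    weights = 1.0 / (dists[nearest] + eps)
    # Sum the weights separately for each label.
    totals = np.bincount(train_labels[nearest], weights=weights)
    return int(np.argmax(totals)), totals

# Hypothetical k=4 tie: two Red houses (label 0) at distance 1,
# two Blue houses (label 1) at distance 2.
houses = np.array([[1.0, 0.0], [0.0, 1.0], [2.0, 0.0], [0.0, 2.0]])
families = np.array([0, 0, 1, 1])
newcomer = np.array([0.0, 0.0])

label, totals = knn_weighted_vote(houses, families, newcomer, k=4)
# Plain counting gives 2 vs 2; the weighted totals are about 2.0 (Red) vs 1.0 (Blue).
```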

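So what are some important things you see here?

You need to have information about all the houses in town, right? Because we have to check the distance from the new-comer to all the existing houses to find the nearest neighbours. If there are plenty of houses and families, it takes a lot of memory, and also more time for calculation.

There is almost zero time spent on any kind of training or preparation.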

Now let's see it in OpenCV.

10.1.3. kNN in OpenCV

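We will do a simple example here, with two families (classes), just like above. In the next chapter, we will do more and better examples.

So here, we label the Red family as Class-0 (denoted by 0) and the Blue family as Class-1 (denoted by 1). We create 25 families, or 25 training data, and label each of them as either Class-0 or Class-1. We do all this with the help of the random number generator in Numpy.

Then we plot it with the help of Matplotlib. Red families are shown as red triangles and Blue families are shown as blue squares.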

>>> %matplotlib inline

>>>

>>> import cv2

>>> import numpy as np

>>> import matplotlib.pyplot as plt

>>>

>>> # Feature set containing (x,y) values of 25 known/training data

>>> trainData = np.random.randint(0,100,(25,2)).astype(np.float32)

>>>

>>> # Labels each one either Red or Blue with numbers 0 and 1

>>> responses = np.random.randint(0,2,(25,1)).astype(np.float32)

>>>

>>> # Take Red families and plot them

>>> red = trainData[responses.ravel()==0]

>>> plt.scatter(red[:,0],red[:,1],80,'r','^')

>>>

>>> # Take Blue families and plot them

>>> blue = trainData[responses.ravel()==1]

>>> plt.scatter(blue[:,0],blue[:,1],80,'b','s')

>>>

>>> plt.show()

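You will get something similar to our first image. Since you are using a random number generator, you will get different data each time you run the code.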

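Next, initiate the kNN algorithm and pass trainData and responses to train the kNN (it constructs a search tree).

Then we will bring in one new-comer and classify him into a family with the help of kNN in OpenCV. Before going to kNN, we need to know something about our test data (the data of new-comers). Our data should be a floating-point array of size \(number \; of \; testdata \times number \; of \; features\). Then we find the nearest neighbours of the new-comer. We can specify how many neighbours we want. It returns:

The label given to the new-comer, depending on the kNN theory we saw earlier. If you want the Nearest Neighbour algorithm, just specify \(k=1\), where k is the number of neighbours.

The labels of the k nearest neighbours.

The corresponding distances from the new-comer to each nearest neighbour.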

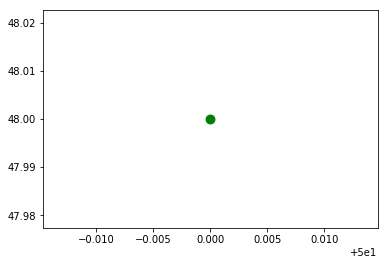

So let's see how it works. The new-comer is marked in green.

>>> newcomer = np.random.randint(0,100,(1,2)).astype(np.float32)

>>> plt.scatter(newcomer[:,0],newcomer[:,1],80,'g','o')

>>> # knn = cv2.knn()

>>> # knn = cv2.KNearest()

>>> # https://blog.csdn.net/zhangpan929/article/details/86217374

>>> knn = cv2.ml.KNearest_create()

>>> # knn.train(trainData,responses)

>>> knn.train(trainData, cv2.ml.ROW_SAMPLE, responses)

>>> # ret, results, neighbours ,dist = knn.find_nearest(newcomer, 3)

>>> ret, results, neighbours ,dist = knn.findNearest(newcomer, 3)

>>>

>>> print ("result: ", results,"\n")

>>> print ("neighbours: ", neighbours,"\n")

>>> print ("distance: ", dist)

>>>

>>> plt.show()

result: [[1.]]

neighbours: [[1. 1. 0.]]

distance: [[117. 305. 314.]]

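Because the data is random, your output will differ from run to run. In another run, the result was as follows: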

result: [[ 1.]]

neighbours: [[ 1. 1. 1.]]

distance: [[ 53. 58. 61.]]

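It says that our new-comer has 3 neighbours, all from the Blue family. Therefore, he is labelled as Blue family. This is evident from the resulting plot.

If you have a large number of data, you can just pass them as an array. The corresponding results are also obtained as arrays.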

>>> # 10 new comers

>>> newcomers = np.random.randint(0,100,(10,2)).astype(np.float32)

>>> ret, results, neighbours, dist = knn.findNearest(newcomers, 3)

>>> # The results also will contain 10 labels.
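To make the array shapes concrete without depending on OpenCV, the following NumPy-only sketch classifies 10 new-comers at once and returns the same three kinds of output described earlier: one label per new-comer, the labels of the k neighbours, and their distances. The function `find_nearest_batch` is a hypothetical stand-in written for this illustration, not OpenCV's code; it uses brute-force squared Euclidean distances, which is an assumption about the metric.

```python
import numpy as np

def find_nearest_batch(train_points, train_labels, queries, k):
    """Brute-force kNN for many queries at once (illustrative stand-in)."""
    # Pairwise squared distances via broadcasting: shape (n_queries, n_train).
    diffs = queries[:, np.newaxis, :] - train_points[np.newaxis, :, :]
    sq_dists = np.sum(diffs ** 2, axis=2)
    # For each query, indices of its k nearest training points.
    order = np.argsort(sq_dists, axis=1, kind="stable")[:, :k]
    neighbour_dists = np.take_along_axis(sq_dists, order, axis=1)
    neighbour_labels = train_labels[order]  # shape (n_queries, k)
    # Majority label per query, kept as a column to give shape (n_queries, 1).
    results = np.array([[np.bincount(row).argmax()] for row in neighbour_labels])
    return results, neighbour_labels, neighbour_dists

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
train_points = rng.integers(0, 100, size=(25, 2)).astype(np.float32)
train_labels = rng.integers(0, 2, size=25)
queries = rng.integers(0, 100, size=(10, 2)).astype(np.float32)

results, neighbours, dist = find_nearest_batch(train_points, train_labels, queries, 3)
print(results.shape, neighbours.shape, dist.shape)
```

Each row of the three outputs belongs to one new-comer, and within a row the neighbours are ordered from nearest to farthest.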